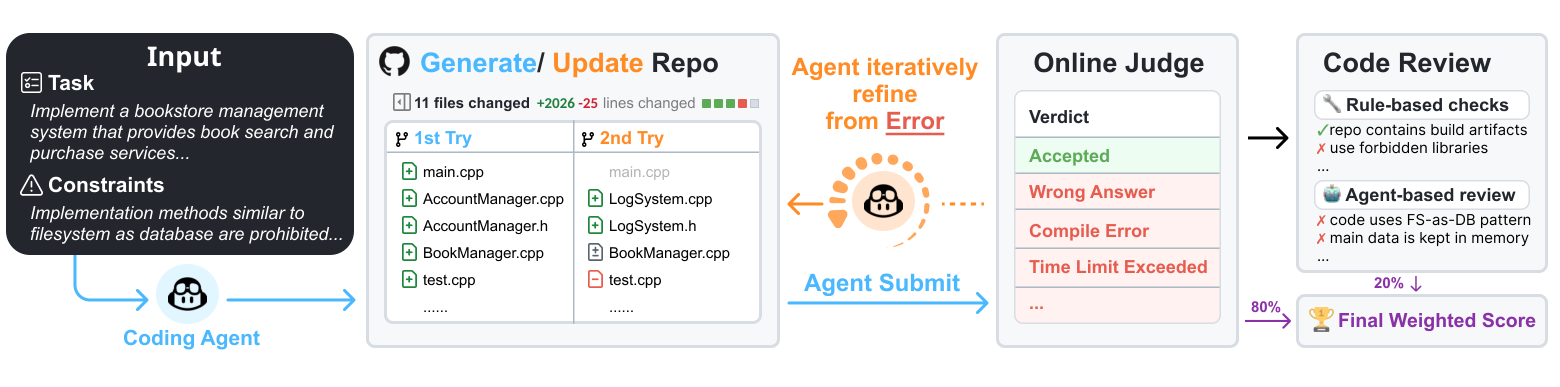

Performance on ProjDevBench across six coding agents and multiple LLM backends. Exec. is the execution score from the Online Judge, CR is the code review score, and Final is their weighted combination (80% Exec. + 20% CR).
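The weighting in the caption can be illustrated with a minimal sketch. It assumes both scores are reported on the same scale (for example 0 to 100). The function name `final_score` and the sample values are hypothetical and are not taken from the benchmark's code or results.

```python
def final_score(exec_score: float, cr_score: float) -> float:
    """Combine the two scores as stated in the caption: 80% Exec. + 20% CR.

    Assumes both inputs are on the same scale (e.g. 0-100).
    """
    return 0.8 * exec_score + 0.2 * cr_score


# Hypothetical scores for illustration only, not benchmark results.
example = final_score(exec_score=50.0, cr_score=75.0)
print(f"Final = {example:.1f}")
```

Because execution carries four times the weight of code review, a one-point change in Exec. moves Final by 0.8 points, while a one-point change in CR moves it by 0.2.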

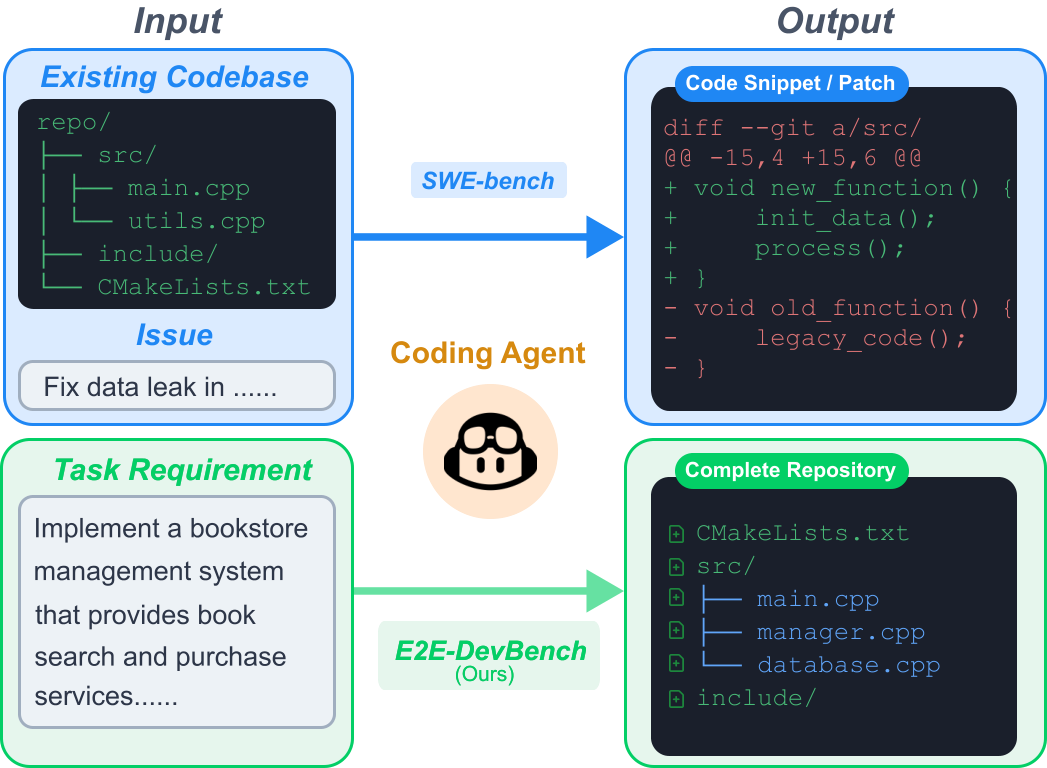

Task comparison: Unlike benchmarks where coding agents modify code snippets in pre-existing codebases, ProjDevBench evaluates end-to-end repository construction from project-level requirements.